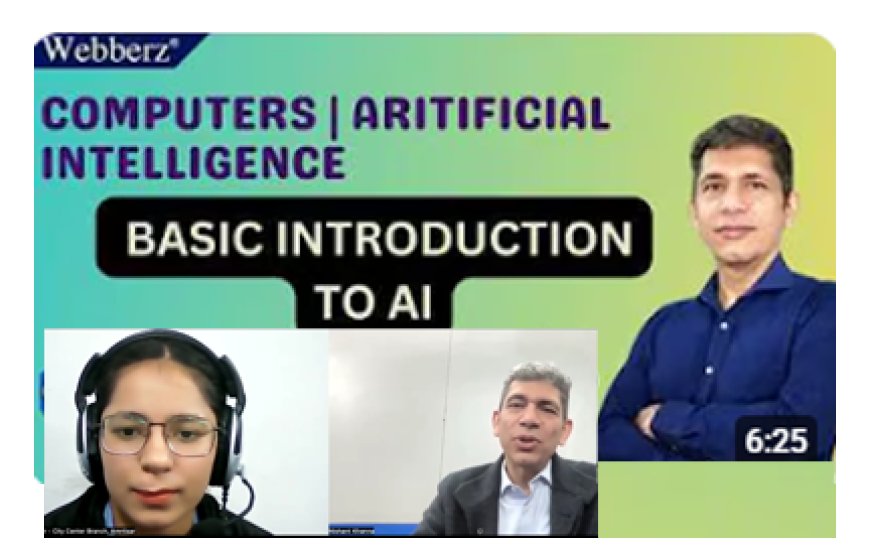

What is Artificial Intelligence | Basic Introduction to AI | Riya Arora Computer Trainer webberz Amritsar

Riya Arora Computer Trainer webberz Amritsar

In this video from Webberz, Riya Arora Computer Trainer explains the basics of Artificial Intelligence (AI) in a simple and student-friendly way. Discover what AI is, how computers use it, real-life applications, and why AI is shaping the future of technology. This session is perfect for school students, beginners, and anyone starting their journey into computer science and AI.

✔️ Meaning of Artificial Intelligence

✔️ Role of computers in AI

✔️ Everyday examples of AI

✔️ Importance of AI

✔️ Beginner-level explanation of AI

From Riya Arora Computer Trainer webberz Amritsar.

- Input: You feed the computer a giant pile of information (photos, numbers, or text).

- Processing: The computer finds the "invisible threads" or patterns connecting that information.

- Output: When you ask a question, it uses those patterns to give you the most likely answer.

- Narrow AI (What we have now): This AI is a "pro" at one specific thing, such as playing chess or translating languages, but it can't do anything else.

- General AI (The future/Sci-Fi): This would be an AI that can learn and do anything a human can. It doesn't exist yet.
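To make the Input, Processing, and Output steps above concrete, here is a tiny Python sketch. It is only an illustration, not how real AI systems are built: the fruit weights are made-up numbers, and the function names `learn_patterns` and `answer` are invented for this example. The "pattern" it finds is simply the average weight of each fruit.

```python
from collections import Counter

# Input: a small pile of labelled examples (made-up numbers for illustration).
# Each example is (weight in grams, name of the fruit).
examples = [
    (120, "apple"), (130, "apple"), (150, "apple"),
    (10, "grape"), (12, "grape"), (8, "grape"),
]


def learn_patterns(data):
    """Processing: find the average weight for each fruit."""
    totals, counts = Counter(), Counter()
    for weight, label in data:
        totals[label] += weight
        counts[label] += 1
    return {label: totals[label] / counts[label] for label in totals}


def answer(patterns, weight):
    """Output: pick the fruit whose average weight is closest to the question."""
    return min(patterns, key=lambda label: abs(patterns[label] - weight))


patterns = learn_patterns(examples)
print(answer(patterns, 140))  # 140 g is closest to the apple average
print(answer(patterns, 9))    # 9 g is closest to the grape average
```

This little program is also a fair picture of Narrow AI: it can tell apples from grapes by weight, and nothing else. Ask it about a banana and it will still answer "apple" or "grape", because those are the only patterns it has seen.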

JatinderBedi
